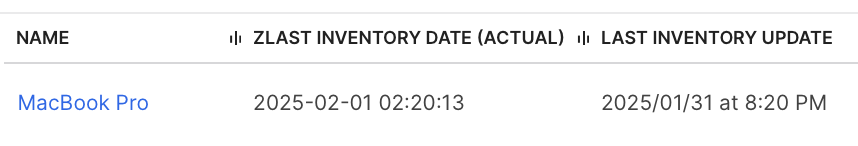

Or rather should I say, “Do you know when they are”? Tell me, Mac Admins, has this ever happened to you: You need to figure out when a computer last submitted inventory to Jamf. Seems easy, right? Just use the built-in Last Inventory criteria, right? Well, if you use the API to update computer records, then the moment you do, that becomes the new Last Inventory date. The actual time when a Mac last sent its inventory is lost and can now only be loosely correlated with Last Check-In. Not ideal™

Does anyone really know what time it is?

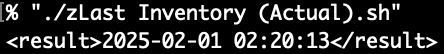

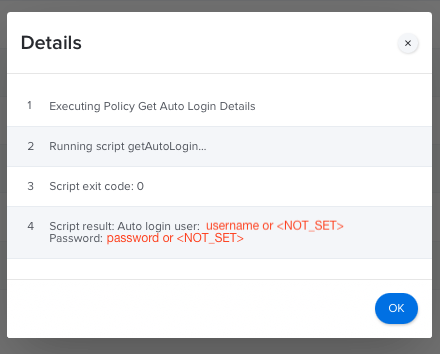

Adding zLast Inventory (Actual) creates a safe harbor for the actual date of an inventory submission. It won’t change no matter how many API writes you make to the computer record. Just create a new extension attribute in your Jamf with an input type of Script and a data type of Date. Leave it in the General category, so it’s nearer to the built-in Last Inventory field in the UI. You might also want to add it as a default column in your Computer Inventory Display preferences and/or use it in Advanced Searches and reports where more reality is desired.

Additional Uses for finding victims of “Unknown Error”

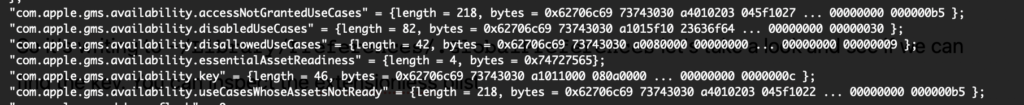

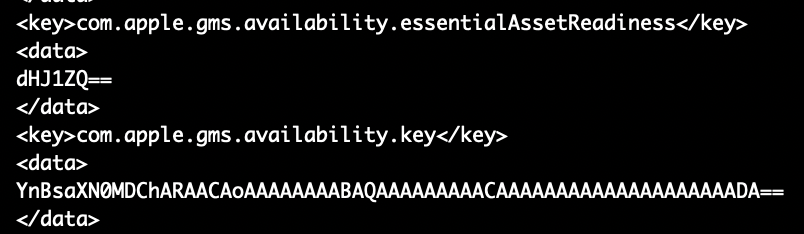

With zLast Inventory (Actual) you may also be able to find Macs that are quietly failing to submit inventory. A few months ago I came across a few Macs with a cryptic "Unknown Error" message. When jamf recon runs, it fails to upload the inventory, which is bad enough, but more insidiously, the Last Inventory date in Jamf is updated anyway! The fix seemed to require both removing the Jamf framework on the endpoint and deleting the computer record in Jamf before re-enrolling. If you know what this is, let me know! Anyway, here it is, all two lines, all gussied up with lots of comments.
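The script itself follows in the next section. First, a rough sketch of the comparison idea described above: if the built-in Last Inventory is well ahead of zLast Inventory (Actual), that Mac is worth a look. Everything here is an assumption for illustration: the helper names `to_epoch` and `inventory_drift`, the 600-second default tolerance, and the premise that you already have both dates as UTC strings in `YYYY-MM-DD HH:MM:SS` form (say, from a report export). A mismatch only narrows the field, since API writes move the built-in date too, so you would still need to check the record's History.

```shell
#!/bin/bash
# Hypothetical helper: convert "YYYY-MM-DD HH:MM:SS" (assumed to be UTC) to epoch seconds.
# Tries BSD/macOS date syntax first, then falls back to GNU date.
to_epoch() {
    date -u -j -f "%Y-%m-%d %H:%M:%S" "$1" +%s 2>/dev/null || date -u -d "$1" +%s 2>/dev/null
}

# Hypothetical check: prints "ok" or "suspect".
# $1 = built-in Last Inventory, $2 = zLast Inventory (Actual), both assumed UTC
# $3 = tolerance in seconds (illustrative default of 600)
inventory_drift() {
    local builtin_epoch ea_epoch tolerance diff
    builtin_epoch=$(to_epoch "$1") || return 2
    ea_epoch=$(to_epoch "$2") || return 2
    tolerance=${3:-600}

    # Absolute difference between the two timestamps
    diff=$(( builtin_epoch - ea_epoch ))
    if (( diff < 0 )); then
        diff=$(( -diff ))
    fi

    if (( diff > tolerance )); then
        echo "suspect"
    else
        echo "ok"
    fi
}

inventory_drift "2025-02-01 12:00:05" "2025-02-01 12:00:00"   # prints "ok"
inventory_drift "2025-02-10 09:30:00" "2025-02-01 12:00:00"   # prints "suspect"
```

The tolerance exists because the EA runs during the inventory collection while the built-in date is stamped at submission, so a small gap is expected; how small is something you would have to tune for your own fleet.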

The extension attribute

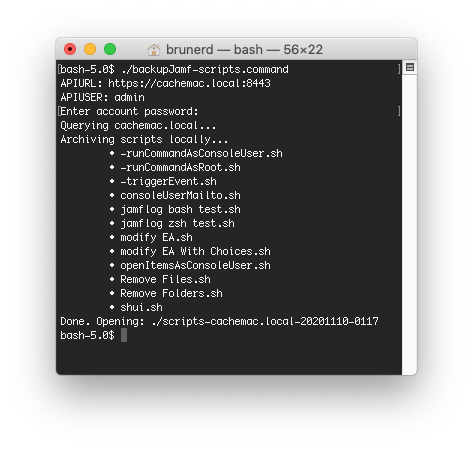

#!/bin/bash

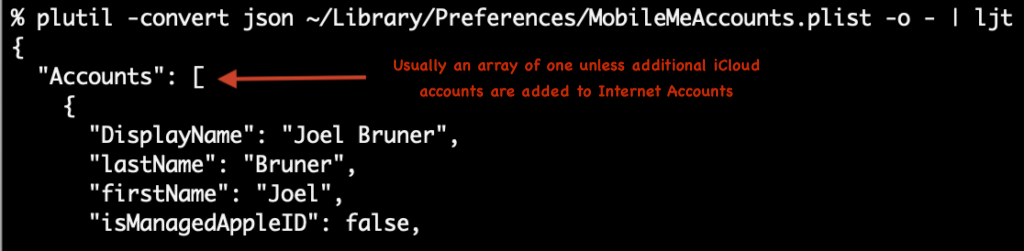

# zLast Inventory (Actual) - Copyright (c) 2025 Joel Bruner (https://github.com/brunerd/macAdminTools/tree/main/Jamf/EAs) Licensed under the MIT License

# A Jamf Pro Extension Attribute to report the _actual time_ of a successful inventory submission

# This is to address two issues with the built-in Last Inventory:

# 1) This date stamp is not affected by API writes to the computer record

# 2) Detect "Unknown Error" inventory submission failures if date does not match built-in Last Inventory date (excluding API writes in History)

# Notes:

# The "z" at the beginning of this EA's name is to ensure it is runs last and is closest to built-in Last Inventory datestamp

# The Jamf EA date type does not consider time zone offsets so we must normalize the time to UTC (-u) for the most consistent behavior

# When viewing an EA date in a Report, Inventory Display, or Computer Record it will not be localized according to Preferences as built-in dates are

#MySQL DATETIME format with time normalized to UTC (YYYY-MM-DD HH:MM:SS)

DATE_NORMALIZED=$(date -u +"%F %T")

echo "<result>${DATE_NORMALIZED}</result>"Ideas in Waiting

Apparently, my “maximizer” self can’t just write a dang two-line script without also thinking of all the “Jamf Ideas” that either need to be created and/or upvoted in relation to this. I encourage you to take a look at these and vote them up too.

- “Inventory Time becomes incorrect with API (JN-I-16064)” – The “raison d’être” of this blog post: it shouldn’t be called Last Inventory if API writes are going to affect it too. This feature request dates back to May 22, 2014 (and I’m sure there are FRs that pre-date that!) Its status is “Future Consideration”: At almost 11 years later, the future is here now, Jamf!

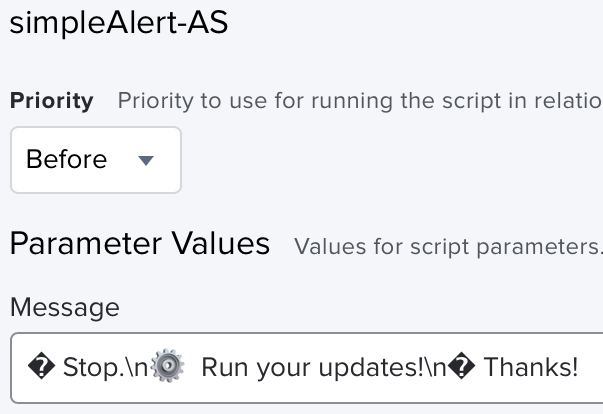

- “Localize Extension Attribute dates in the UI according to Account Preferences (JPRO-I-1068)” – As you can see above, the dates from Extension Attributes are not localized like the “built-in” dates of Last Update and Last Inventory. Why not?

- “Expanded parsing of date and time formats in extension attribute(JPRO-I-1078)” – Jamf hitched it’s wagon to MySQL’s

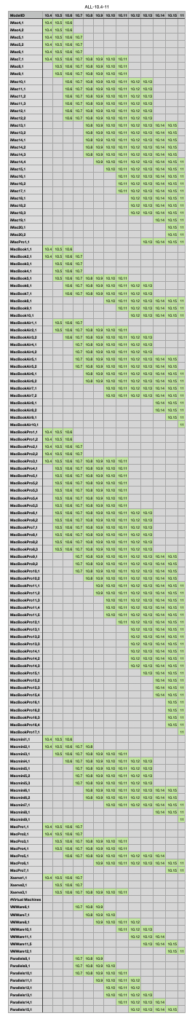

DATETIMEformatYYYY-MM-DD HH:MM:SSwhich was introduced in 1999 and has no provisions for time zone. In 2025 this seems somewhat rudimentary. Jamf could expand what’s accepted fordatetypes to accommodate more date time representations or at least in the short term document the fact you need normalize all times to UTC before sending. - “Ability to customize order of display fields in Reports (JN-I-22451)” – Another “oldie but goody” from Jan 22, 2014 (Happy belated 11th Birthday!) it has 575 votes and has to be one of the most requested features that’s still under “Future Consideration” (again, the future is now Jamf). If we have to make an extension attribute to get some truth for Last Inventory can we at least have it show up somewhere we’d like? This request has many siblings, some that should be merged here, here, here, here and ones that were merged here and here.

- Oh yeah, almost forgot, you cannot “Retain Display items when Cloning Saved Advanced Search (JN-I-22827)”

I thought I was going crazy after I’d made a series of reports by cloning, only to find the “clones” had copied only the search criteria but not all those Display items I’d spent time checking off – that I had to do again and again. [Edit: I might have misremembered and you have to uncheck the default Display items you don’t want re-enabled in your “clone” so this still holds true] Aye, what kinda “clone” is that?! It’s not! This “idea” is labeled “Not likely to implement” but hey, you can still leave your angsty comments in memoriam like me, vote it up, and pour outta 40. 🪦🍻 [Edit: Yes, these are “first world” nerd problems, I know. Blame it on the bad early 2025 vibes.]
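Back on the date-format idea for a second (JPRO-I-1078): here is a small sketch of why the EA normalizes to UTC. The function names `stamp_local` and `stamp_utc` are made up for this illustration, and the `TZ` values are POSIX-style strings chosen only so the demo does not depend on a time zone database being installed. Since the DATETIME string carries no offset, a local-time stamp means different instants on different Macs, while the `-u` stamp does not.

```shell
#!/bin/bash
# Local-time stamp: the string changes with the machine's TZ setting
stamp_local() { date +"%F %T"; }

# UTC stamp (-u), the form used in the EA: same string regardless of TZ
stamp_utc() { date -u +"%F %T"; }

# POSIX-style TZ strings have an inverted sign: JST-9 is UTC+9, PST8 is UTC-8
echo "Tokyo local:   $(TZ=JST-9 stamp_local)"
echo "Pacific local: $(TZ=PST8 stamp_local)"
echo "Tokyo UTC:     $(TZ=JST-9 stamp_utc)"
echo "Pacific UTC:   $(TZ=PST8 stamp_utc)"
```

Run it and the two "local" lines should sit 17 hours apart, while the two "UTC" lines match.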

Where was I? Oh right, the thing…

In closing, add zLast Inventory (Actual) as an extension attribute to your Jamf. You never know when you might need a bit of reality for Last Inventory in your Jamf reports and searches.